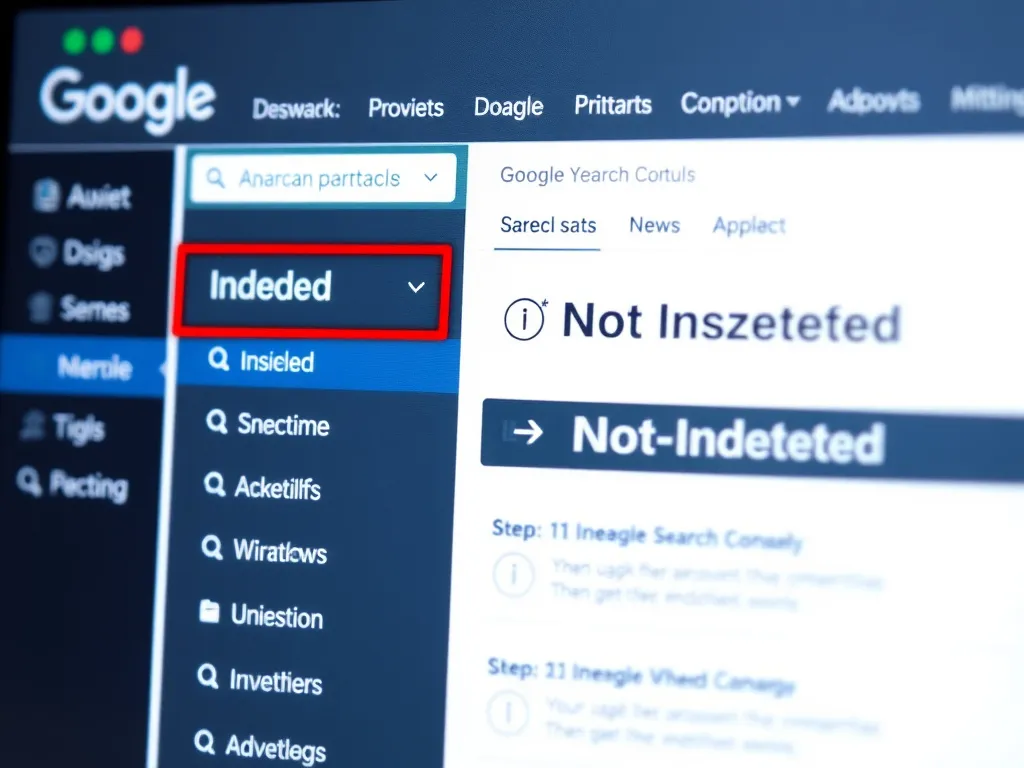

You’ve created a great page, but it still doesn’t show up in Google. Or you check Google Search Console and see messages like “Indexed, not submitted,” “Crawled – currently not indexed,” or “Discovered – currently not indexed.” It’s frustrating—especially when you’re unsure where to start.

This guide explains how to identify and resolve indexing issues using Google Search Console, step by step. We’ll discuss common statuses such as “Crawled – currently not indexed,” explore advanced troubleshooting, and show how to prevent these problems from recurring.

💡 Pro Tip:

Indexing issues often indicate broader SEO challenges. Use tools like Hunnt AI to monitor site health and gain actionable insights before they become bigger problems.

Why Indexing Issues Matter

If Google doesn’t index a page, it can’t appear in search—no matter how good the content is. Indexing is the first step in SEO. Fixing problems here gives your pages a chance to rank and attract organic traffic.

1. Understand What “Indexing Issues” Really Mean

Before making changes, remember one key point: Google isn’t obligated to index every URL on your site. Indexing depends on quality, relevance, and technical signals.

Think of Googlebot as a librarian. If your book (webpage) is poorly written, hard to find, or duplicates another, the librarian won’t add it to the shelf (Google’s index). Your pages should be:

- Easy to find: Well-linked and included in your sitemap.

- High-quality: Unique, valuable, and well-structured.

- Technically sound: Free of blocks, errors, or rendering issues.

Indexing issues can arise from:

- Technical blocks (robots.txt, noindex, incorrect canonicals).

- Low-quality or duplicate content.

- Pages Google considers unnecessary or low priority.

- Temporary crawl or server problems.

Google Search Console doesn’t just say “something’s wrong” — it provides specific reasons. Your job is to interpret them and decide if a page should be indexed at all.

2. Start in the Pages (Indexing) Report

In Google Search Console, navigate to Indexing > Pages for your property. You’ll see two main sections:

- Indexed: URLs currently in Google’s index.

- Not indexed: URLs Google knows about but hasn’t indexed (with a reason).

Click “Not indexed” to view categories of issues. Each category reflects a different cause. Below are the most common ones and how to address them.

⚠️ Important:

Not every “Not indexed” page is a problem. Some are meant to be excluded (e.g., admin areas, thank-you pages, or duplicates). Always confirm whether a page should be indexed before modifying it.

3. Common Indexing Statuses and How to Fix Them

3.1 “Crawled – currently not indexed”

Google crawled the page but chose not to index it for now. There’s no technical block; this is usually about quality or priority.

Google might exclude pages because:

- The content is thin or low-value (e.g., placeholders, auto-generated text).

- The page is a duplicate or near-duplicate of another.

- Google doesn’t see the page as important enough (few internal links or backlinks).

- It’s part of a large set of very similar pages (e.g., product variants with minimal differences).

Ask yourself:

- Is the content unique and genuinely helpful?

- Does it overlap heavily with other pages?

- Is it thin or auto-generated?

- Does it have enough internal links?
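To put a number on "overlaps heavily," you can compare page text directly. Here is a minimal Python sketch that splits each page into overlapping three-word sequences (shingles) and scores each pair of pages with Jaccard similarity. The function names, the three-word shingle size, and the 0.8 threshold are illustrative assumptions. This is not how Google or any particular SEO tool measures duplication.

```python
from __future__ import annotations

import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping k-word sequences ("shingles") in the text."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (1.0 = identical sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.8, k: int = 3):
    """List (url_a, url_b, score) for page pairs at or above the similarity threshold."""
    sigs = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for url_a, url_b in combinations(sigs, 2):
        score = jaccard(sigs[url_a], sigs[url_b])
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs


pages = {
    "/shirt-red": "Classic cotton t-shirt with a crew neck. Machine washable.",
    "/shirt-blue": "Classic cotton t-shirt with a crew neck. Machine washable.",
    "/running-guide": "How to choose running shoes for flat feet.",
}
print(near_duplicates(pages))  # [('/shirt-red', '/shirt-blue', 1.0)]
```

Comparing every pair is fine for a few hundred pages. For tens of thousands of URLs, an approximate technique such as MinHash avoids the pairwise comparison, or you can rely on a crawler's built-in near-duplicate report.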

Ways to increase your chances of indexing:

- Enhance the content: Add depth, examples, visuals, and internal links. Aim to be the best resource on the topic.

- Improve internal linking: Link from stronger, high-authority pages.

- Add external links: If possible, earn reputable backlinks to show importance.

- Check for duplicates: Use tools like Screaming Frog or Ahrefs to find duplicates.

- Request indexing: After improvements, use the URL Inspection Tool to request indexing.

```html
<!-- Example: An internal link with descriptive anchor text to improve discovery -->
<a href="/your-page">Descriptive text about your page's topic</a>
```

3.2 “Discovered – currently not indexed”

Google knows the URL exists but hasn’t crawled it yet. This is common on:

- Large sites with many URLs.

- Sites with limited crawl budget due to slow performance or server limits.

- Pages that are hard to reach via internal links.

Crawl budget is how many pages Google will crawl on your site in a given period. If your site has thousands of URLs but limited authority, not all may get crawled.

To help Google crawl these pages:

- Improve internal linking: Link important pages from your homepage or high-traffic hubs.

- Include in XML sitemap: Ensure the URL is in your sitemap.xml.

- Optimize server performance: Slow server responses lower the rate at which Googlebot crawls your site. Use tools like Google PageSpeed Insights to speed things up.

- Reduce duplicate content: Fewer duplicates free up crawl budget for unique pages.

- Request indexing: For high-priority URLs, use the URL Inspection Tool.
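To confirm your priority URLs actually appear in the sitemap, you can parse it and compare. A minimal Python sketch using only the standard library; the `important_urls` list and the inline sitemap string are placeholders (in practice you would download your live sitemap.xml first), and sitemap index files that point to other sitemaps are not handled.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org 0.9 protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Return every <loc> value listed in a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def missing_from_sitemap(important_urls: list[str], xml_text: str) -> list[str]:
    """Return the priority URLs that the sitemap does not list."""
    listed = sitemap_urls(xml_text)
    return [url for url in important_urls if url not in listed]


sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/page1</loc></url>
  <url><loc>https://example.com/page2</loc></url>
</urlset>"""

important_urls = ["https://example.com/page1", "https://example.com/new-guide"]
print(missing_from_sitemap(important_urls, sitemap))  # ['https://example.com/new-guide']
```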

3.3 “Alternate page with proper canonical tag”

Google considers your URL a duplicate or alternate version of another and indexes the canonical instead.

Canonical tags tell Google which version is the “main” page. For similar pages (e.g., product colors), use a canonical to point to the primary version.

Check:

- Does the canonical tag point to the correct URL?

- Is the page distinct enough to warrant its own index entry?
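To answer the first question across many URLs, you can extract each page's `rel="canonical"` link and compare it with the page's own address. A minimal Python sketch using the standard library's `html.parser`; `check_canonical` is a hypothetical helper name, and it reads only `<link>` elements in the HTML, not a canonical sent in an HTTP `Link` header. Exact string comparison means `http` vs `https` or a trailing slash counts as a different URL, which is usually what you want to catch.

```python
from __future__ import annotations

from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> element in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonicals: list[str] = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel_values = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and attrs.get("href"):
            self.canonicals.append(attrs["href"])


def check_canonical(page_url: str, html: str) -> str:
    """Classify the canonical as missing, conflicting, self, or pointing elsewhere."""
    finder = CanonicalFinder()
    finder.feed(html)
    finder.close()
    # Resolve relative hrefs against the page URL before comparing.
    targets = {urljoin(page_url, href) for href in finder.canonicals}
    if not targets:
        return "missing"
    if len(targets) > 1:
        return "conflicting"
    target = targets.pop()
    return "self" if target == page_url else f"points to {target}"


html = '<head><link rel="canonical" href="https://example.com/main-page" /></head>'
print(check_canonical("https://example.com/main-page", html))            # self
print(check_canonical("https://example.com/main-page?color=red", html))  # points to https://example.com/main-page
```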

If you want the page indexed:

- Set its canonical tag to itself if it’s the main version.

- Eliminate conflicting canonicals in HTTP headers or sitemaps.

- Differentiate the content if it shouldn’t be treated as a duplicate.

```html
<!-- Example: Correct canonical tag -->
<link rel="canonical" href="https://example.com/main-page" />
```

3.4 “Excluded by ‘noindex’ tag”

This is straightforward: the page has a noindex directive in the HTML or HTTP headers.

noindex tells search engines not to index the page. It’s useful for:

- Admin or login pages.

- Thank-you pages after forms.

- Duplicate or low-value content.

If you want the page indexed:

- Remove the noindex directive from the HTML (meta robots tag) or the X-Robots-Tag HTTP header.

- Check whether plugins or rules add it automatically (e.g., WordPress SEO plugins).

- Resubmit the URL for indexing afterward.

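```html
<!-- Example: Replace the noindex directive. Deleting the robots meta tag also works, since "index, follow" is the default. -->
<meta name="robots" content="index, follow" />
```

If the page is intentionally noindexed (e.g., admin pages or thin archives), you can ignore this status.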
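Because a noindex directive can live in either the HTML or an `X-Robots-Tag` HTTP header, check both before assuming a page is clean. A minimal Python sketch that works on headers and HTML you have already fetched; `noindex_sources` is a hypothetical helper, it only reads the `robots` and `googlebot` meta names, and it treats a user-agent-specific header such as `X-Robots-Tag: otherbot: noindex` as if it applied to every crawler.

```python
from __future__ import annotations

import re
from html.parser import HTMLParser

BLOCKING = {"noindex", "none"}  # "none" is shorthand for "noindex, nofollow"


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if tag == "meta" and name in {"robots", "googlebot"}:
            content = (attrs.get("content") or "").lower()
            self.directives.update(t.strip() for t in content.split(",") if t.strip())


def noindex_sources(headers: dict[str, str], html: str) -> list[str]:
    """Return where a noindex directive was found: 'meta', 'header', or both."""
    found = []
    finder = RobotsMetaFinder()
    finder.feed(html)
    finder.close()
    if finder.directives & BLOCKING:
        found.append("meta")
    # Header names are case-insensitive; user-agent prefixes are not distinguished.
    for key, value in headers.items():
        if key.lower() == "x-robots-tag":
            tokens = set(re.split(r"[,\s:]+", value.lower()))
            if tokens & BLOCKING:
                found.append("header")
                break
    return found


print(noindex_sources({}, '<meta name="robots" content="index, follow" />'))  # []
print(noindex_sources({"X-Robots-Tag": "noindex"}, '<meta name="robots" content="noindex">'))  # ['meta', 'header']
```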

3.5 “Blocked by robots.txt”

Your robots.txt file is preventing Googlebot from crawling the page. If a page can’t be crawled, it usually won’t be indexed.

The robots.txt file tells search engines what they can and can’t crawl. It’s located at https://yourdomain.com/robots.txt.

To fix:

- Open /robots.txt on your domain.

- Look for Disallow rules affecting the URL path.

- Remove or adjust those rules if the block wasn’t intended.

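```text
# Example: robots.txt file
User-agent: *
# Blocks crawling of the /private/ directory
Disallow: /private/
# Allows crawling of the /public/ directory (crawling is allowed by default, so this line is illustrative)
Allow: /public/
```

Keep low-value areas (like /wp-admin/ or internal tools) blocked as needed. For truly sensitive content, rely on authentication instead: robots.txt is publicly readable and only controls crawling. A blocked URL can still be indexed without its content if other pages link to it, so use noindex on a crawlable page when you need to keep it out of search results.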
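To test specific URLs against your rules before and after editing, Python's standard-library `urllib.robotparser` gives a quick local check. Treat it as an approximation: it applies the first matching rule in file order and doesn't understand `*` or `$` wildcards in paths, while Google applies the most specific (longest) matching rule. Confirm edge cases with the URL Inspection Tool.

```python
from urllib.robotparser import RobotFileParser

# The example rules from above, parsed locally instead of fetched from a live site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Allow: /public/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for path in ["/private/report.html", "/public/pricing", "/blog/indexing-guide"]:
    url = "https://example.com" + path
    allowed = parser.can_fetch("Googlebot", url)
    print(f"{url}: {'allowed' if allowed else 'blocked'}")
```

To check your live file instead, call `parser.set_url("https://yourdomain.com/robots.txt")` and then `parser.read()` in place of `parse()`.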

3.6 “Page with redirect”

Redirecting URLs typically aren’t indexed; the destination page is what matters. If many pages are marked “Page with redirect,” consider:

- Are these legacy URLs you redirected on purpose? If so, that’s fine.

- Are users or internal links still hitting old URLs unnecessarily?

Redirects are helpful for:

- Moving content to a new URL.

- Fixing broken links.

- Consolidating duplicates.

Tidy up internal links to point directly to the final URL whenever possible. This cuts down on unnecessary redirects and improves crawl efficiency.
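To find internal links that should point straight to the final URL, you can resolve each redirect chain from a crawl export. A minimal Python sketch; the `redirects` mapping (source path to redirect target) and the `internal_links` list are hypothetical stand-ins for data from a crawler such as Screaming Frog, and the 10-hop limit is an arbitrary safety cap.

```python
from __future__ import annotations


def final_destination(url: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[str, int]:
    """Follow a redirect chain; return (final URL, number of hops). Raises on loops."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops


redirects = {
    "/old-pricing": "/pricing-2023",
    "/pricing-2023": "/pricing",
}
internal_links = ["/old-pricing", "/pricing", "/blog"]

for link in internal_links:
    target, hops = final_destination(link, redirects)
    if hops:
        # Prints: Update link /old-pricing -> /pricing (2 redirect hop(s))
        print(f"Update link {link} -> {target} ({hops} redirect hop(s))")
```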

3.7 Other Common Indexing Statuses

3.7.1 “Soft 404”

A “Soft 404” occurs when a page returns a 200 (OK) status but has so little content that it looks like a 404 error page.

How to fix:

- Add meaningful content.

- If the page shouldn’t exist, return a proper 404 or 410.

- Use the URL Inspection Tool to verify.
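To spot likely soft 404s before Google flags them, you can scan crawl results for pages that return 200 but have almost no text or error-style wording. A rough Python heuristic; it assumes you already have each page's status code and visible text, and the 50-word threshold and phrase list are arbitrary assumptions, not Google's criteria.

```python
from __future__ import annotations

import re

# Hypothetical phrases that often appear on empty or error-like pages.
ERROR_PHRASES = ("page not found", "no longer available", "no results found")


def looks_like_soft_404(status: int, text: str, min_words: int = 50) -> bool:
    """Flag a 200 response whose visible text is very short or reads like an error page."""
    if status != 200:
        return False  # a real 404/410 response is not a soft 404
    lowered = text.lower()
    word_count = len(re.findall(r"\w+", lowered))
    return word_count < min_words or any(phrase in lowered for phrase in ERROR_PHRASES)


crawl_results = [
    ("/product/123", 200, "Sorry, this product is no longer available."),
    ("/guide", 200, "Step-by-step guide to fixing indexing issues. " * 20),
    ("/deleted", 410, "Gone"),
]
for url, status, text in crawl_results:
    if looks_like_soft_404(status, text):
        print(f"Possible soft 404: {url}")
```

Expect false positives (short contact pages, for example), so review flagged URLs by hand and confirm them with the URL Inspection Tool.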

3.7.2 “Blocked due to unauthorized request (401)”

Googlebot couldn’t access the page because it requires authentication (e.g., a login).

How to fix:

- Remove authentication for public pages.

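- If it should remain private, keep the authentication in place and remove the URL from your sitemap. Googlebot can’t see a noindex tag on a page it can’t access, and a page that returns 401 won’t be indexed anyway.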

3.7.3 “Not found (404)”

The page returns a 404, meaning it doesn’t exist. If it was intentionally removed, that’s fine. If not:

- Restore it if it was deleted by mistake.

- Use a 301 redirect to a relevant page if the content moved.

4. Use the URL Inspection Tool for Page-Level Debugging

For any URL, use the URL Inspection tool in Search Console:

- Paste the URL into the top bar in Search Console.

- Check the “Indexing” section to confirm its status.

- Click “View crawled page” (if available) to see Googlebot’s view and spot rendering issues.

- Review “Page availability” and “Enhancements” for more clues (e.g., mobile usability, structured data).

After fixing issues, click “Request indexing”. This prompts a recrawl but doesn’t guarantee indexing.

💡 Pro Tip:

Use the “Live Test” in the URL Inspection Tool to see real-time rendering by Googlebot—especially useful for JavaScript-heavy pages.

5. Prioritize Which Indexing Issues to Fix First

Not every URL deserves the same effort. Instead of trying to “fix everything”:

- Start with key landing pages: Focus on pages that drive traffic, leads, or revenue.

- Prioritize high-value content: Blog posts, product pages, or service pages aligned with your goals.

- Ignore low-value pages: Admin pages, duplicates, or thin pages that don’t need indexing.

Use this checklist to rank fixes:

- ✅ Is the page driving traffic or conversions? (Check Google Analytics)

- ✅ Is it linked from your homepage or key hub pages?

- ✅ Does it offer unique, high-quality content?

- ✅ Is it free of technical blocks (e.g., noindex, robots.txt)?
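If you have more URLs than time, you can turn the checklist into a simple score. A minimal Python sketch; the `Page` fields mirror the four questions, the equal weighting is an assumption, and `monthly_conversions` (from your analytics tool) only breaks ties.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Page:
    url: str
    drives_traffic_or_conversions: bool
    linked_from_home_or_hub: bool
    unique_high_quality: bool
    free_of_technical_blocks: bool
    monthly_conversions: int = 0  # tie-breaker, taken from your analytics tool


def checklist_score(page: Page) -> int:
    """Count the checklist questions answered 'yes' (True counts as 1)."""
    return sum([
        page.drives_traffic_or_conversions,
        page.linked_from_home_or_hub,
        page.unique_high_quality,
        page.free_of_technical_blocks,
    ])


def prioritize(pages: list[Page]) -> list[str]:
    """Order URLs by checklist score, then by conversions, highest first."""
    ranked = sorted(
        pages,
        key=lambda p: (checklist_score(p), p.monthly_conversions),
        reverse=True,
    )
    return [p.url for p in ranked]


pages = [
    Page("/blog/old-news", False, False, False, True),
    Page("/pricing", True, True, True, True, monthly_conversions=40),
    Page("/guides/indexing", True, False, True, True, monthly_conversions=12),
]
print(prioritize(pages))  # ['/pricing', '/guides/indexing', '/blog/old-news']
```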

6. Advanced Troubleshooting for Complex Indexing Issues

Sometimes indexing issues arise from more complex causes like JavaScript rendering, dynamic URLs, or server problems. Here’s how to address them:

6.1 JavaScript-Rendered Content

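Googlebot can render JavaScript, but rendering may be delayed, and content that depends on failing scripts or on resources blocked by robots.txt may never reach Google’s index. To diagnose: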

- Use the URL Inspection Tool to see the rendered page.

- Compare rendered HTML with source to spot missing content.

- Use server-side rendering (SSR) or static rendering to improve crawlability. Google describes dynamic rendering as a workaround rather than a long-term solution.
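To compare rendered HTML with source, you can copy both (the raw source from View Source, the rendered HTML from the URL Inspection Tool's crawled-page view or a headless browser) and diff their visible text. A minimal Python sketch using the standard library; the two HTML strings are placeholders. Anything in the output exists only after JavaScript runs, so Google sees it only if rendering succeeds.

```python
from __future__ import annotations

from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text chunks, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> set[str]:
    extractor = TextExtractor()
    extractor.feed(html)
    extractor.close()
    return set(extractor.chunks)


def js_only_text(source_html: str, rendered_html: str) -> set[str]:
    """Text that appears after rendering but not in the raw source HTML."""
    return visible_text(rendered_html) - visible_text(source_html)


source_html = '<div id="app"></div><script src="/bundle.js"></script>'
rendered_html = '<div id="app"><h1>Blue Widget</h1><p>In stock, ships today.</p></div>'
print(js_only_text(source_html, rendered_html))
```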

6.2 Dynamic URLs and Parameters

Dynamic URLs (e.g., example.com/page?id=123) can create duplicates or waste crawl budget. To fix:

- Handle parameters on your own site: Google retired the URL Parameters tool in Search Console in 2022, so there is no longer a setting there for this.

- Use canonical tags to point to the main page.

- Avoid parameters for navigation (e.g., ?sort=price); prefer static URLs.
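Before choosing canonicals for parameterized URLs, it helps to see how many variants collapse onto the same page. A minimal Python sketch; the `IGNORED_PARAMS` set and the `utm_` prefix are site-specific assumptions. Parameters like `sort` or tracking tags usually don't change the content, while something like `id` often does.

```python
from __future__ import annotations

from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical lists: parameters that reorder or track but don't change the content.
IGNORED_PARAMS = {"sort", "order", "sessionid"}
IGNORED_PREFIXES = ("utm_",)


def normalize(url: str) -> str:
    """Drop ignorable parameters and sort the rest so equivalent URLs compare equal."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in IGNORED_PARAMS and not key.startswith(IGNORED_PREFIXES)
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_variants(urls: list[str]) -> dict[str, list[str]]:
    """Map each normalized URL to the crawled variants that collapse onto it."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return dict(groups)


crawled = [
    "https://example.com/shoes?sort=price",
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes",
    "https://example.com/page?id=123",
]
for canonical, variants in group_variants(crawled).items():
    print(canonical, len(variants))
```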

6.3 Server-Side Issues

Slow or misconfigured servers can limit crawling. To diagnose:

- Review server logs to see Googlebot activity.

- Use tools like Google PageSpeed Insights to find performance bottlenecks.

- Consider upgrading hosting or using a content delivery network (CDN).
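To review Googlebot activity in your server logs, you can count its requests per status code and per path. A minimal Python sketch that assumes the common Apache/Nginx "combined" log format; adjust the regex for your server. Any client can send a Googlebot user-agent string, so verify important findings with a reverse DNS lookup, as Google recommends.

```python
from __future__ import annotations

import re
from collections import Counter

# Combined log format:
# IP ident user [time] "METHOD path PROTOCOL" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_summary(lines):
    """Count requests whose user agent claims to be Googlebot, by status and by path."""
    statuses = Counter()
    paths = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match["agent"]:
            statuses[match["status"]] += 1
            paths[match["path"]] += 1
    return statuses, paths


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET /products/blue-widget HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.5 - - [10/Oct/2023:13:56:02 +0000] "GET /products/blue-widget HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
statuses, paths = googlebot_summary(sample_log)
print(statuses)               # requests per status code
print(paths.most_common(10))  # most-crawled paths
```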

7. Prevent Indexing Problems Before They Start

Fixing is good—preventing is better. Build these habits:

7.1 Plan Your Site Structure

A clear site structure helps Googlebot crawl and index. Best practices:

- Use a logical hierarchy (e.g., Homepage > Category > Subcategory > Page).

- Keep important pages within 3 clicks of the homepage.

- Use breadcrumb navigation to strengthen internal links.
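To check the three-click guideline, you can run a breadth-first search over your internal link graph, starting at the homepage. A minimal Python sketch; the `links` dictionary (page to the pages it links to) and the `known_pages` set are hypothetical stand-ins for a crawler export and your sitemap or CMS list.

```python
from __future__ import annotations

from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search: fewest clicks needed to reach each page from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


links = {
    "/": ["/shoes", "/blog"],
    "/shoes": ["/shoes/running"],
    "/shoes/running": ["/shoes/running/trail"],
    "/shoes/running/trail": ["/shoes/running/trail/model-x"],
}
known_pages = {
    "/", "/shoes", "/blog", "/shoes/running", "/shoes/running/trail",
    "/shoes/running/trail/model-x", "/landing/old-promo",
}

depths = click_depths(links)
too_deep = sorted(url for url, depth in depths.items() if depth > 3)
unreachable = sorted(known_pages - set(depths))
print(too_deep)     # ['/shoes/running/trail/model-x']
print(unreachable)  # ['/landing/old-promo']
```

Pages in `known_pages` that the search never reaches have no link path from the homepage, which is how orphan pages show up in this kind of check.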

7.2 Avoid Thin or Duplicate Content

Google favors unique, high-quality content. Avoid:

- Auto-generated content (e.g., boilerplate product descriptions).

- Thin pages with little value (e.g., placeholders).

- Duplicate content across multiple URLs.

7.3 Use a Clean XML Sitemap

Your XML sitemap should list only indexable, high-value URLs. Follow these tips:

- Submit your sitemap in Google Search Console.

- Exclude noindex pages, redirects, and duplicates.

- Update it regularly as pages change.

```xml
<!-- Example: XML sitemap. Google ignores changefreq and priority; it uses lastmod when it is consistently accurate. -->
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/page1</loc>
    <lastmod>2023-10-01</lastmod>
    <changefreq>weekly</changefreq>
    <priority>0.8</priority>
  </url>
  <url>
    <loc>https://example.com/page2</loc>
    <lastmod>2023-10-02</lastmod>
    <changefreq>monthly</changefreq>
    <priority>0.5</priority>
  </url>
</urlset>
```

7.4 Monitor Your Site Regularly

Use tools to catch indexing issues early:

- Google Search Console: Enable alerts for new indexing issues.

- Screaming Frog: Audit for technical SEO problems.

- Hunnt AI: Get real-time insights and indexing recommendations.

8. Common Mistakes to Avoid When Fixing Indexing Issues

Even experienced SEOs slip up. Watch out for:

- Overusing noindex tags: Don’t exclude pages you want to rank.

- Blocking key pages in robots.txt: Avoid disallowing index-worthy content.

- Ignoring crawl budget: Large sites must optimize crawl efficiency.

- Incorrect canonical tags: Double-check they point to the right URL.

- Not validating fixes: Use the URL Inspection Tool to confirm changes.

- Submitting too many URLs at once: Prioritize high-value pages first.

9. Case Study: Fixing a Large-Scale Indexing Issue

Here’s a real-world look at solving a large-scale indexing problem.

The Problem

An e-commerce site with 50,000+ product pages saw 30% labeled “Crawled – currently not indexed” in Google Search Console, costing potential organic traffic and revenue.

The Diagnosis

Using Google Search Console and Screaming Frog, the team found:

- Many product pages had thin or duplicate content.

- Internal linking was poorly structured, limiting discovery.

- The site had a limited crawl budget due to slow server responses.

The Fix

They implemented these steps:

- Improved content quality: Added unique descriptions, images, and videos to product pages.

- Optimized internal linking: Strengthened links from categories to products and refined navigation.

- Reduced duplicate content: Applied canonicals to consolidate similar products.

- Improved server performance: Upgraded hosting and added a CDN to cut load times.

The Results

After 3 months, the site achieved:

- A 40% increase in indexed pages.

- A 25% increase in organic traffic.

- A 15% increase in conversions from organic search.

10. How to Request Indexing for Multiple Pages at Once

Submitting many pages one by one is slow. Speed it up with these options:

10.1 Using the Google Search Console API

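The Search Console API can’t submit URLs for indexing. Its URL Inspection endpoint lets you check the index status of URLs programmatically, which is useful for monitoring large sites with thousands of pages. Google’s separate Indexing API can request crawling, but Google limits it to pages with JobPosting or BroadcastEvent (livestream) structured data. For other pages, keep your sitemap up to date and use “Request indexing” in the URL Inspection Tool for a handful of priority URLs.

```python
# Example: check a URL's index status via the Search Console URL Inspection API.
# This endpoint inspects URLs; it does not request indexing.
import requests

ACCESS_TOKEN = "[YOUR_ACCESS_TOKEN]"  # OAuth 2.0 token for an account with access to the property

url = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"
payload = {
    "inspectionUrl": "https://example.com/page-to-index",
    # Must match the property exactly (use "sc-domain:example.com" for a Domain property).
    "siteUrl": "https://example.com/",
}
headers = {
    "Authorization": f"Bearer {ACCESS_TOKEN}",
    "Content-Type": "application/json",
}

response = requests.post(url, json=payload, headers=headers)
response.raise_for_status()
index_status = response.json()["inspectionResult"]["indexStatusResult"]
print(index_status.get("coverageState"))  # human-readable status, e.g. "Submitted and indexed"
```

10.2 Using Third-Party Tools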

Tools like Bulk Indexing Checker or IndexMeNow can help submit multiple URLs at once.

11. What to Do If Google Still Won’t Index Your Page

If nothing’s working and Google still won’t index a page, try these steps:

- Double-check for technical issues: Use the URL Inspection Tool to rule out blocks or errors.

- Improve content quality: Add depth, examples, and visuals to increase value.

- Build external links: Earn reputable backlinks to signal importance.

- Wait and monitor: Sometimes indexing takes time—track the page in GSC.

- Consider removing the page: If it’s low-quality or unnecessary, indexing may not be worth it.

12. The Role of Internal Linking in Indexing

Internal links help Googlebot discover and prioritize content. Follow these best practices:

- Link from high-authority pages: Pages with more backlinks or traffic pass more value.

- Use descriptive anchor text: Replace “click here” with text that describes the target (e.g., “learn more about SEO indexing”).

- Avoid orphan pages: Ensure every page has at least one internal link.

- Use a logical hierarchy: Link from categories to subcategories to individual pages.
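To find links that miss the descriptive-anchor guideline, you can scan page HTML for generic anchor text. A minimal Python sketch using the standard library; the `GENERIC_ANCHORS` set is a hypothetical starting list you would extend for your site and language, and only exact matches are flagged, so "learn more about SEO indexing" passes while a bare "learn more" does not.

```python
from __future__ import annotations

from html.parser import HTMLParser

GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "more", "this page"}


class AnchorAudit(HTMLParser):
    """Record (href, anchor text) for every <a href> whose text is generic."""

    def __init__(self) -> None:
        super().__init__()
        self.flagged: list[tuple[str, str]] = []
        self._href: str | None = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse whitespace and lowercase before comparing.
            text = " ".join("".join(self._text).split()).lower()
            if text in GENERIC_ANCHORS:
                self.flagged.append((self._href, text))
            self._href = None


html = """
<p>To fix thin pages, <a href="/guides/thin-content">read our thin content guide</a>.</p>
<p>Pricing details are <a href="/pricing">here</a>, or <a href="/contact"> Click  Here </a>.</p>
"""
audit = AnchorAudit()
audit.feed(html)
audit.close()
print(audit.flagged)  # [('/pricing', 'here'), ('/contact', 'click here')]
```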

13. XML Sitemaps: Best Practices for Indexing

An XML sitemap helps Google discover and index pages faster. Best practices include:

- Include only indexable URLs: Exclude noindex pages, redirects, and duplicates.

- Update regularly: Add new pages as soon as they’re published.

- Don’t rely on priority tags: Google ignores the priority and changefreq values, though other search engines may read them. Keep lastmod accurate instead.

- Submit to Google Search Console: Ensure Google knows about your sitemap.

- Validate your sitemap: Use tools like XML Sitemap Validator to catch errors.
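Generating the sitemap from your page inventory makes the "only indexable URLs" rule automatic. A minimal Python sketch using `xml.etree.ElementTree`; the page records and their fields (`status`, `noindex`, `canonical`, `lastmod`) are hypothetical stand-ins for data from your CMS or a crawl.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def is_indexable(page: dict) -> bool:
    """Keep only 200-status, non-noindexed pages that are their own canonical."""
    return (
        page["status"] == 200
        and not page["noindex"]
        and page.get("canonical", page["url"]) == page["url"]
    )


def build_sitemap(pages: list[dict]) -> str:
    """Serialize the indexable pages as a <urlset> sitemap string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in filter(is_indexable, pages):
        url_el = ET.SubElement(urlset, "url")
        ET.SubElement(url_el, "loc").text = page["url"]
        if page.get("lastmod"):
            ET.SubElement(url_el, "lastmod").text = page["lastmod"]
    return ET.tostring(urlset, encoding="unicode")


pages = [
    {"url": "https://example.com/page1", "status": 200, "noindex": False, "lastmod": "2023-10-01"},
    {"url": "https://example.com/thank-you", "status": 200, "noindex": True},
    {"url": "https://example.com/old-page", "status": 301, "noindex": False},
    {"url": "https://example.com/page2?color=red", "status": 200, "noindex": False,
     "canonical": "https://example.com/page2"},
]
print(build_sitemap(pages))
```

`ElementTree` escapes characters such as `&` in URLs for you. To save a file with an XML declaration, you could have `build_sitemap` return the element and pass it to `ET.ElementTree(...).write("sitemap.xml", encoding="utf-8", xml_declaration=True)`. The sitemaps protocol caps each file at 50,000 URLs and 50 MB uncompressed, so large sites need several files plus a sitemap index.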

14. How to Monitor Indexing Issues Proactively

It’s easier to prevent indexing issues than to fix them later. Monitor proactively by:

14.1 Set Up Alerts in Google Search Console

Google Search Console can email you when new indexing issues appear. To enable:

- Go to Settings > Preferences in GSC.

- Turn on email notifications for indexing issues.

14.2 Use Third-Party Tools

Tools like Screaming Frog, Sitebulb, or Hunnt AI can help you audit your site regularly and catch indexing issues early.

14.3 Regularly Audit Your Site

Run a full SEO audit at least quarterly to catch issues early. Focus on:

- Technical SEO (e.g., crawlability, rendering, performance).

- Content quality (e.g., uniqueness, depth, relevance).

- Internal linking (e.g., orphan pages, anchor text).
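Between full audits, comparing two snapshots of URL statuses is a cheap way to catch regressions. A minimal Python sketch; it assumes two dictionaries mapping URL to status (for example, built from Page indexing exports or URL Inspection API results), and the `INDEXED` strings are placeholders to adjust to the exact wording in your data.

```python
from __future__ import annotations

# Placeholder status strings; match them to the exact wording in your exports.
INDEXED = {"Submitted and indexed", "Indexed, not submitted in sitemap"}


def newly_unindexed(before: dict[str, str], after: dict[str, str]) -> dict[str, str]:
    """URLs indexed in the earlier snapshot that are not indexed in the later one."""
    return {
        url: status
        for url, status in after.items()
        if before.get(url) in INDEXED and status not in INDEXED
    }


before = {
    "https://example.com/pricing": "Submitted and indexed",
    "https://example.com/blog/guide": "Submitted and indexed",
    "https://example.com/tag/misc": "Excluded by 'noindex' tag",
}
after = {
    "https://example.com/pricing": "Submitted and indexed",
    "https://example.com/blog/guide": "Crawled - currently not indexed",
    "https://example.com/tag/misc": "Excluded by 'noindex' tag",
}
for url, status in newly_unindexed(before, after).items():
    print(f"ALERT: {url} is now '{status}'")
```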

15. Conclusion

Indexing statuses in Google Search Console are signals and explanations, not just errors. Once you understand each status, you can decide: Should this URL be indexed? If yes, is the blocker content quality, site structure, or a technical rule?

Treat the Pages report as an ongoing health check, not a one-off task. With clear priorities, quality content, and a solid technical base, most indexing issues become manageable and predictable.

Remember:

- Not all “Not indexed” pages are a problem—some are intentional.

- Focus on high-value pages first.

- Use the URL Inspection Tool for page-level debugging.

- Monitor your site proactively to catch issues early.

- Leverage tools like Hunnt AI to automate monitoring and get actionable insights.

🎯 Next Steps:

1. Open Google Search Console and check your Pages (Indexing) Report.

2. Identify the most common indexing issues on your site.

3. Prioritize fixes based on business impact.

4. Implement the fixes and request indexing for high-priority pages.

5. Set up monitoring to prevent future issues.

FAQs About Indexing Issues in Google Search Console

Q1: Why does Google say “Crawled – currently not indexed” for my page?

Google crawled your page but chose not to index it due to quality or relevance concerns. Improve the content, add internal links, and request indexing again.

Q2: How long does it take for Google to index a page after requesting indexing?

It can take from a few days to a few weeks after a request. There’s no guaranteed timeline—it depends on Google’s crawl schedule and the page’s quality.

Q3: Can I force Google to index my page?

No. You can’t force indexing, but you can improve your odds by ensuring the page is high-quality, well-linked, and technically accessible. Requesting indexing can speed up recrawling.

Q4: What’s the difference between “Crawled – currently not indexed” and “Discovered – currently not indexed”?

“Crawled – currently not indexed” means Google crawled the page but chose not to index it. “Discovered – currently not indexed” means Google knows about it but hasn’t crawled it yet, often due to crawl budget constraints.

Q5: How can I check if my page is indexed in Google?

You can check by:

- Using the URL Inspection Tool in Google Search Console.

- Searching site:yourdomain.com/your-page in Google.

- Using third-party tools like Ahrefs or Moz.

Q6: What should I do if my page is indexed but not ranking?

If it’s indexed but not ranking, focus on:

- Improving content quality and relevance.

- Building high-quality backlinks.

- Optimizing on-page SEO (e.g., meta tags, headings, internal links).

- Boosting page speed and mobile-friendliness.

Glossary of Key Terms

- Crawl Budget: The number of pages Googlebot will crawl on your site within a given timeframe.

- Canonical Tag: An HTML tag that tells Google which version of a page is the “main” one to avoid duplicate content issues.

- Noindex Tag: An HTML tag that tells Google not to index a page.

- Robots.txt: A text file that tells search engines which pages or directories they can or cannot crawl.

- XML Sitemap: A file that lists the indexable pages on your site to help search engines discover and index them.

- Orphan Page: A page with no internal links pointing to it, making it hard for Googlebot to discover.

- Soft 404: A page that returns a 200 (OK) status code but contains little or no content, making it appear like a 404 error page.